329 KiB

| bibliography | |

|---|---|

|

::: titlepage Tracy Profiler

The user manual

Bartosz Taudul <wolf@nereid.pl>

2025-07-23 https://github.com/wolfpld/tracy :::

Quick overview

Hello and welcome to the Tracy Profiler user manual! Here you will find all the information you need to start using the profiler. This manual has the following layout:

-

Chapter 1, A quick look at Tracy Profiler, gives a short description of what Tracy is and how it works.

-

Chapter 2, First steps, shows how you can integrate the profiler into your application and how to build the graphical user interface (section 2.3). At this point, you will be able to establish a connection from the profiler to your application.

-

Chapter 3, Client markup, provides information on how to instrument your application, in order to retrieve useful profiling data. This includes a description of the C API (section 3.13), which enables usage of Tracy in any programming language.

-

Chapter 4, Capturing the data, goes into more detail on how the profiling information can be captured and stored on disk.

-

Chapter 5, Analyzing captured data, guides you through the graphical user interface of the profiler.

-

Chapter 6, Exporting zone statistics to CSV, explains how to export some zone timing statistics into a CSV format.

-

Chapter 7, Importing external profiling data, documents how to import data from other profilers.

-

Chapter 8, Configuration files, gives information on the profiler settings.

Quick-start guide

For Tracy to profile your application, you will need to integrate the profiler into your application and run an independent executable that will act both as a server with which your application will communicate and as a profiling viewer. The most basic integration looks like this:

-

Add the Tracy repository to your project directory.

-

Tracy source files in the

project/tracy/publicdirectory. -

Add

TracyClient.cppas a source file. -

Add

tracy/Tracy.hppas an include file. -

Include

Tracy.hppin every file you are interested in profiling. -

Define

TRACY_ENABLEfor the WHOLE project. -

Add the macro

FrameMarkat the end of each frame loop. -

Add the macro

ZoneScopedas the first line of your function definitions to include them in the profile. -

Compile and run both your application and the profiler server.

-

Hit Connect on the profiler server.

-

Tada! You're profiling your program!

There's much more Tracy can do, which can be explored by carefully reading this manual. In case any problems should surface, refer to section 2.1 to ensure you've correctly included Tracy in your project. Additionally, you should refer to section 3 to make sure you are using FrameMark, ZoneScoped, and any other Tracy constructs correctly.

A quick look at Tracy Profiler

Tracy is a real-time, nanosecond resolution hybrid frame and sampling profiler that you can use for remote or embedded telemetry of games and other applications. It can profile CPU1, GPU2, memory allocations, locks, context switches, automatically attribute screenshots to captured frames, and much more.

While Tracy can perform statistical analysis of sampled call stack data, just like other statistical profilers (such as VTune, perf, or Very Sleepy), it mainly focuses on manual markup of the source code. Such markup allows frame-by-frame inspection of the program execution. For example, you will be able to see exactly which functions are called, how much time they require, and how they interact with each other in a multi-threaded environment. In contrast, the statistical analysis may show you the hot spots in your code, but it cannot accurately pinpoint the underlying cause for semi-random frame stutter that may occur every couple of seconds.

Even though Tracy targets frame profiling, with the emphasis on analysis of frame time in real-time applications (i.e. games), it does work with utilities that do not employ the concept of a frame. There's nothing that would prohibit the profiling of, for example, a compression tool or an event-driven UI application.

You may think of Tracy as the RAD Telemetry plus Intel VTune, on overdrive.

Real-time

The concept of Tracy being a real-time profiler may be explained in a couple of different ways:

-

The profiled application is not slowed down by profiling3. The act of recording a profiling event has virtually zero cost -- it only takes a few nanoseconds. Even on low-power mobile devices, execution speed has no noticeable impact.

-

The profiler itself works in real-time, without the need to process collected data in a complex way. Actually, it is pretty inefficient in how it works because it recalculates the data it presents each frame anew. And yet, it can run at 60 frames per second.

-

The profiler has full functionality when the profiled application runs and the data is still collected. You may interact with your application and immediately switch to the profiler when a performance drop occurs.

Nanosecond resolution

It is hard to imagine how long a nanosecond is. One good analogy is to compare it with a measure of length. Let's say that one second is one meter (the average doorknob is at the height of one meter).

One millisecond (\frac{1}{1000} of a second) would be then the length of a millimeter. The average size of a red ant or the width of a pencil is 5 or 6 mm. A modern game running at 60 frames per second has only 16 ms to update the game world and render the entire scene.

One microsecond (\frac{1}{1000} of a millisecond) in our comparison equals one micron. The diameter of a typical bacterium ranges from 1 to 10 microns. The diameter of a red blood cell or width of a strand of spider web silk is about 7 μm.

And finally, one nanosecond (\frac{1}{1000} of a microsecond) would be one nanometer. The modern microprocessor transistor gate, the width of the DNA helix, or the thickness of a cell membrane are in the range of 5 nm. In one ns the light can travel only 30 cm.

Tracy can achieve single-digit nanosecond measurement resolution due to usage of hardware timing mechanisms on the x86 and ARM architectures4. Other profilers may rely on the timers provided by the operating system, which do have significantly reduced resolution (about 300 ns -- 1 μs). This is enough to hide the subtle impact of cache access optimization, etc.

Timer accuracy

You may wonder why it is vital to have a genuinely high resolution timer5. After all, you only want to profile functions with long execution times and not some short-lived procedures that have no impact on the application's run time.

It is wrong to think so. Optimizing a function to execute in 430 ns, instead of 535 ns (note that there is only a 100 ns difference) results in 14 ms savings if the function is executed 18000 times6. It may not seem like a big number, but this is how much time there is to render a complete frame in a 60 FPS game. Imagine that this is your particle processing loop.

You also need to understand how timer precision is reflected in measurement errors. Take a look at figure 1. There you can see three discrete timer tick events, which increase the value reported by the timer by 300 ns. You can also see four readings of time ranges, marked A_1, A_2; B_1, B_2; C_1, C_2 and D_1, D_2.

Now let's take a look at the timer readings.

-

The

AandDranges both take a very short amount of time (10 ns), but theArange is reported as 300 ns, and theDrange is reported as 0 ns. -

The

Brange takes a considerable amount of time (590 ns), but according to the timer readings, it took the same time (300 ns) as the short livedArange. -

The

Crange (610 ns) is only 20 ns longer than theBrange, but it is reported as 900 ns, a 600 ns difference!

Here, you can see why using a high-precision timer is essential. While there is no escape from the measurement errors, a profiler can reduce their impact by increasing the timer accuracy.

Frame profiler

Tracy aims to give you an understanding of the inner workings of a tight loop of a game (or any other kind of interactive application). That's why it slices the execution time of a program using the frame7 as a basic work-unit8. The most interesting frames are the ones that took longer than the allocated time, producing visible hitches in the on-screen animation. Tracy allows inspection of such misbehavior.

Sampling profiler

Tracy can periodically sample what the profiled application is doing, which provides detailed performance information at the source line/assembly instruction level. This can give you a deep understanding of how the processor executes the program. Using this information, you can get a coarse view at the call stacks, fine-tune your algorithms, or even 'steal' an optimization performed by one compiler and make it available for the others.

On some platforms, it is possible to sample the hardware performance counters, which will give you information not only where your program is running slowly, but also why.

Remote or embedded telemetry

Tracy uses the client-server model to enable a wide range of use-cases (see figure 2). For example, you may profile a game on a mobile phone over the wireless connection, with the profiler running on a desktop computer. Or you can run the client and server on the same machine, using a localhost connection. It is also possible to embed the visualization front-end in the profiled application, making the profiling self-contained9.

In Tracy terminology, the profiled application is a client, and the profiler itself is a server. It was named this way because the client is a thin layer that just collects events and sends them for processing and long-term storage on the server. The fact that the server needs to connect to the client to begin the profiling session may be a bit confusing at first.

Why Tracy?

You may wonder why you should use Tracy when so many other profilers are available. Here are some arguments:

-

Tracy is free and open-source (BSD license), while RAD Telemetry costs about $8000 per year.

-

Tracy provides out-of-the-box Lua bindings. It has been successfully integrated with other native and interpreted languages (Rust, Arma scripting language) using the C API (see chapter 3.13 for reference).

-

Tracy has a wide variety of profiling options. For example, you can profile CPU, GPU, locks, memory allocations, context switches, and more.

-

Tracy is feature-rich. For example, statistical information about zones, trace comparisons, or inclusion of inline function frames in call stacks (even in statistics of sampled stacks) are features unique to Tracy.

-

Tracy focuses on performance. It uses many tricks to reduce memory requirements and network bandwidth. As a result, the impact on the client execution speed is minimal, while other profilers perform heavy data processing within the profiled application (and then claim to be lightweight).

-

Tracy uses low-level kernel APIs, or even raw assembly, where other profilers rely on layers of abstraction.

-

Tracy is multi-platform right from the very beginning. Both on the client and server-side. Other profilers tend to have Windows-specific graphical interfaces.

-

Tracy can handle millions of frames, zones, memory events, and so on, while other profilers tend to target very short captures.

-

Tracy doesn't require manual markup of interesting areas in your code to start profiling. Instead, you may rely on automated call stack sampling and add instrumentation later when you know where it's needed.

-

Tracy provides a mapping of source code to the assembly, with detailed information about the cost of executing each instruction on the CPU.

Performance impact

Let's profile an example application to check how much slowdown is introduced by using Tracy. For this purpose we have used etcpak10. The input data was a 16384 \times 16384 pixels test image, and the 4 \times 4 pixel block compression function was selected to be instrumented. The image was compressed on 12 parallel threads, and the timing data represents a mean compression time of a single image.

The results are presented in table 1. Dividing the average of run time differences (37.7 ms) by the count of captured zones per single image (16777216) shows us that the impact of profiling is only 2.25 ns per zone (this includes two events: start and end of a zone).

::: {#PerformanceImpact} Mode Zones (total) Zones (single image) Clean run Profiling run Difference

ETC1 201326592 16777216 110.9 ms 148.2 ms +37.3 ms

ETC2 201326592 16777216 212.4 ms 250.5 ms +38.1 ms

: Zone capture time cost. :::

Assembly analysis

To see how Tracy achieves such small overhead (only 2.25 ns), let's take a look at the assembly. The following x64 code is responsible for logging the start of a zone. Do note that it is generated by compiling fully portable C++.

mov byte ptr [rsp+0C0h],1 ; store zone activity information

mov r15d,28h

mov rax,qword ptr gs:[58h] ; TLS

mov r14,qword ptr [rax] ; queue address

mov rdi,qword ptr [r15+r14] ; data address

mov rbp,qword ptr [rdi+28h] ; buffer counter

mov rbx,rbp

and ebx,7Fh ; 128 item buffer

jne function+54h -----------+ ; check if current buffer is usable

mov rdx,rbp |

mov rcx,rdi |

call enqueue_begin_alloc | ; reclaim/alloc next buffer

shl rbx,5 <-----------------+ ; buffer items are 32 bytes

add rbx,qword ptr [rdi+48h] ; calculate queue item address

mov byte ptr [rbx],10h ; queue item type

rdtsc ; retrieve time

shl rdx,20h

or rax,rdx ; construct 64 bit timestamp

mov qword ptr [rbx+1],rax ; write timestamp

lea rax,[__tracy_source_location] ; static struct address

mov qword ptr [rbx+9],rax ; write source location data

lea rax,[rbp+1] ; increment buffer counter

mov qword ptr [rdi+28h],rax ; write buffer counter

The second code block, responsible for ending a zone, is similar but smaller, as it can reuse some variables retrieved in the above code.

Examples

To see how to integrate Tracy into your application, you may look at example programs in the examples directory. Looking at the commit history might be the best way to do that.

On the web

Tracy can be found at the following web addresses:

-

Homepage -- https://github.com/wolfpld/tracy

-

Bug tracker -- https://github.com/wolfpld/tracy/issues

-

Discord chat -- https://discord.gg/pk78auc

-

Sponsoring development -- https://github.com/sponsors/wolfpld/

-

Interactive demo -- https://tracy.nereid.pl/

Binary distribution

The version releases of the profiler are provided as precompiled Windows binaries for download at https://github.com/wolfpld/tracy/releases, along with the user manual. You will need to install the latest Visual C++ redistributable package to use them.

Development builds of Windows binaries, and the user manual are available as artifacts created by the automated Continuous Integration system on GitHub.

Note that these binary releases require AVX2 instruction set support on the processor. If you have an older CPU, you will need to set a proper instruction set architecture in the project properties and build the executables yourself.

First steps

Tracy Profiler supports MSVC, GCC, and clang. You will need to use a reasonably recent version of the compiler due to the C++11 requirement. The following platforms are confirmed to be working (this is not a complete list):

-

Windows (x86, x64)

-

Linux (x86, x64, ARM, ARM64)

-

Android (ARM, ARM64, x86)

-

FreeBSD (x64)

-

WSL (x64)

-

OSX (x64)

-

iOS (ARM, ARM64)

-

QNX (x64)

Moreover, the following platforms are not supported due to how secretive their owners are but were reported to be working after extending the system integration layer:

-

PlayStation 4

-

Xbox One

-

Nintendo Switch

-

Google Stadia

You may also try your luck with Mingw, but don't get your hopes too high. This platform was usable some time ago, but nobody is actively working on resolving any issues you might encounter with it.

Initial client setup

The recommended way to integrate Tracy into an application is to create a git submodule in the repository (assuming that you use git for version control). This way, it is straightforward to update Tracy to newly released versions. If that's not an option, all the files required to integrate your application with Tracy are contained in the public directory.

::: bclogo What revision should I use? You have two options when deciding on the Tracy Profiler version you want to use. Take into consideration the following pros and cons:

-

Using the last-version-tagged revision will give you a stable platform to work with. You won't experience any breakages, major UI overhauls, or network protocol changes. Unfortunately, you also won't be getting any bug fixes.

-

Working with the bleeding edge

masterdevelopment branch will give you access to all the new improvements and features added to the profiler. While it is generally expected thatmastershould always be usable, there are no guarantees that it will be so.

Do note that all bug fixes and pull requests are made against the master branch.

:::

With the source code included in your project, add the public/TracyClient.cpp source file to the IDE project or makefile. You're done. Tracy is now integrated into the application.

In the default configuration, Tracy is disabled. This way, you don't have to worry that the production builds will collect profiling data. To enable profiling, you will probably want to create a separate build configuration, with the TRACY_ENABLE define.

::: bclogo Important

-

Double-check that the define name is entered correctly (as

TRACY_ENABLE), don't make a mistake of adding an additionalDat the end. Make sure that this macro is defined for all files across your project (e.g. it should be specified in theCFLAGSvariable, which is always passed to the compiler, or in an equivalent way), and not as a#definein just some of the source files. -

Tracy does not consider the value of the definition, only the fact if the macro is defined or not (unless specified otherwise). Be careful not to make the mistake of assigning numeric values to Tracy defines, which could lead you to be puzzled why constructs such as

TRACY_ENABLE=0don't work as you expect them to do. :::

You should compile the application you want to profile with all the usual optimization options enabled (i.e. make a release build). Profiling debugging builds makes little sense, as the unoptimized code and additional checks (asserts, etc.) completely change how the program behaves. In addition, you should enable usage of the native architecture of your CPU (e.g. -march=native) to leverage the expanded instruction sets, which may not be available in the default baseline target configuration.

Finally, on Unix, make sure that the application is linked with libraries libpthread and libdl. BSD systems will also need to be linked with libexecinfo.

Static library

If you are compiling Tracy as a static library to link with your application, you may encounter some unexpected problems.

When you link a library into your executable, the linker checks if the library provides symbols needed by the program. The library is only used if this is the case. This can be an issue because one of the use cases of Tracy is to simply add it to the application, without any manual instrumentation, and let it profile the execution by sampling. If you use any kind of Tracy macros in your program, this won't be a problem.

However, if you find yourself in a situation where this is a consideration, you can simply add the TracyNoop macro somewhere in your code, for example in the main function. The macro doesn't do anything useful, but it inserts a reference that is satisfied by the static library, which results in the Tracy code being linked in and the profiler being able to work as intended.

CMake integration

You can integrate Tracy with CMake by adding the git submodule folder as a subdirectory.

# set options before add_subdirectory

# available options: TRACY_ENABLE, TRACY_LTO, TRACY_ON_DEMAND, TRACY_NO_BROADCAST, TRACY_NO_CODE_TRANSFER, ...

option(TRACY_ENABLE "" ON)

option(TRACY_ON_DEMAND "" ON)

add_subdirectory(3rdparty/tracy) # target: TracyClient or alias Tracy::TracyClient

Link Tracy::TracyClient to any target where you use Tracy for profiling:

target_link_libraries(<TARGET> PUBLIC Tracy::TracyClient)

::: bclogo CMake FetchContent When using CMake 3.11 or newer, you can use Tracy via CMake FetchContent. In this case, you do not need to add a git submodule for Tracy manually. Add this to your CMakeLists.txt:

FetchContent_Declare(

tracy

GIT_REPOSITORY https://github.com/wolfpld/tracy.git

GIT_TAG master

GIT_SHALLOW TRUE

GIT_PROGRESS TRUE

)

FetchContent_MakeAvailable(tracy)

Then add this to any target where you use tracy for profiling:

target_link_libraries(<TARGET> PUBLIC TracyClient)

:::

While using set(CMAKE_INTERPROCEDURAL_OPTIMIZATION ON) is a convenient way to enable Link-Time Optimization (LTO) for an entire project, there are situations in which this may not work due to excessive compilation times, linking issues, compiler bugs, or other reasons. For such cases, Tracy provides an option to enable Link-Time Optimization for itself using the TRACY_LTO variable during the CMake configuration stage.

Meson integration

If you are using the Meson build system, you can add Tracy using the Wrap dependency system. To do this, place the tracy.wrap file in the subprojects directory of your project, with the following content. The head revision field tracks Tracy's master branch. If you want to lock to a specific version of Tracy instead, you can just set the revision field to an appropriate git tag.

[wrap-git]

url = https://github.com/wolfpld/tracy.git

revision = head

depth = 1

Then, add the following option entry to the meson.options file. Use the name tracy_enable as shown, because the Tracy subproject options inherit it.

option('tracy_enable', type: 'boolean', value: false, description: 'Enable profiling')

Next, add the Tracy dependency to the meson.build project definition file. Don't forget to include this dependency in the appropriate executable or library definitions. This dependency will set all the appropriate definitions (such as TRACY_ENABLE) in your program, so you don't have to do it manually.

tracy = dependency('tracy', static: true)

Finally, let's check if the debugoptimized build type is enabled, and print a little reminder message if it is not. For profiling we want the debug annotations to be present, but we also want to have the code to be optimized.

if get_option('tracy_enable') and get_option('buildtype') != 'debugoptimized'

warning('Profiling builds should set --buildtype=debugoptimized')

endif

Here's a sample command to set up a build directory with profiling enabled. The last option, tracy:on_demand, is used to demonstrate how to set options in the Tracy subproject.

meson setup build --buildtype=debugoptimized -Dtracy_enable=true -Dtracy:on_demand=true

Short-lived applications

In case you want to profile a short-lived program (for example, a compression utility that finishes its work in one second), set the TRACY_NO_EXIT environment variable to 1. With this option enabled, Tracy will not exit until an incoming connection is made, even if the application has already finished executing. If your platform doesn't support an easy setup of environment variables, you may also add the TRACY_NO_EXIT define to your build configuration, which has the same effect.

On-demand profiling

By default, Tracy will begin profiling even before the program enters the main function. However, suppose you don't want to perform a full capture of the application lifetime. In that case, you may define the TRACY_ON_DEMAND macro, which will enable profiling only when there's an established connection with the server.

You should note that if on-demand profiling is disabled (which is the default), then the recorded events will be stored in the system memory until a server connection is made and the data can be uploaded11. Depending on the amount of the things profiled, the requirements for event storage can quickly grow up to a couple of gigabytes. Furthermore, since this data is no longer available after the initial connection, you won't be able to perform a second connection to a client unless the on-demand mode is used.

::: bclogo Caveats The client with on-demand profiling enabled needs to perform additional bookkeeping to present a coherent application state to the profiler. This incurs additional time costs for each profiling event. :::

Client discovery

By default, the Tracy client will announce its presence to the local network12. If you want to disable this feature, define the TRACY_NO_BROADCAST macro.

The program name that is sent out in the broadcast messages can be customized by using the TracySetProgramName(name) macro.

Client network interface

By default, the Tracy client will listen on all network interfaces. If you want to restrict it to only listening on the localhost interface, define the TRACY_ONLY_LOCALHOST macro at compile-time, or set the TRACY_ONLY_LOCALHOST environment variable to 1 at runtime.

If you need to use a specific Tracy client address, such as QNX requires, define the TRACY_CLIENT_ADDRESS macro at compile-time as the desired string address.

By default, the Tracy client will listen on IPv6 interfaces, falling back to IPv4 only if IPv6 is unavailable. If you want to restrict it to only listening on IPv4 interfaces, define the TRACY_ONLY_IPV4 macro at compile-time, or set the TRACY_ONLY_IPV4 environment variable to 1 at runtime.

Setup for multi-DLL projects

Things are a bit different in projects that consist of multiple DLLs/shared objects. Compiling TracyClient.cpp into every DLL is not an option because this would result in several instances of Tracy objects lying around in the process. We instead need to pass their instances to the different DLLs to be reused there.

For that, you need a profiler DLL to which your executable and the other DLLs link. If that doesn't exist, you have to create one explicitly for Tracy13. This library should contain the public/TracyClient.cpp source file. Link the executable and all DLLs you want to profile to this DLL.

If you are targeting Windows with Microsoft Visual Studio or MinGW, add the TRACY_IMPORTS define to your application.

If you are experiencing crashes or freezes when manually loading/unloading a separate DLL with Tracy integration, you might want to try defining both TRACY_DELAYED_INIT and TRACY_MANUAL_LIFETIME macros.

TRACY_DELAYED_INIT enables a path where profiler data is gathered into one structure and initialized on the first request rather than statically at the DLL load at the expense of atomic load on each request to the profiler data. TRACY_MANUAL_LIFETIME flag augments this behavior to provide manual StartupProfiler and ShutdownProfiler functions that allow you to create and destroy the profiler data manually. This manual management removes the need to do an atomic load on each call and lets you define an appropriate place to free the resources.

::: bclogo Keep everything consistent When working with multiple libraries, it is easy to make a mistake and use different sets of feature macros between any two compilation jobs. If you do so, Tracy will not be able to work correctly, and there will be no error or warning messages about the problem. Henceforth, you must make sure each shared object you want to link with, or load uses the same set of macro definitions.

Please note that using a prebuilt shared Tracy library, as provided by some package manager or system distribution, also qualifies as using multiple libraries. :::

Problematic platforms

In the case of some programming environments, you may need to take extra steps to ensure Tracy can work correctly.

Microsoft Visual Studio

If you are using MSVC, you will need to disable the Edit And Continue feature, as it makes the compiler non-conformant to some aspects of the C++ standard. In order to do so, open the project properties and go to C/C++,General,Debug Information Format and make sure Program Database for Edit And Continue (/ZI) is not selected.

For context, if you experience errors like "error C2131: expression did not evaluate to a constant", "failure was caused by non-constant arguments or reference to a non-constant symbol", and "see usage of '__LINE__Var'", chances are that your project has the Edit And Continue feature enabled.

Universal Windows Platform

Due to a restricted access to Win32 APIs and other sandboxing issues (like network isolation), several limitations apply to using Tracy in a UWP application compared to Windows Desktop:

-

Call stack sampling is not available.

-

System profiling is not available.

-

To be able to connect from another machine on the local network, the app needs the privateNetworkClientServer capability. To connect from localhost, an active inbound loopback exemption is also necessary14.

Apple woes

Because Apple has to be think different, there are some problems with using Tracy on OSX and iOS. First, the performance hit due to profiling is higher than on other platforms. Second, some critical features are missing and won't be possible to achieve:

-

There's no support for the

TRACY_NO_EXITmode. -

Profiling is interrupted when the application exits. This will result in missing zones, memory allocations, or even source location names.

-

OpenGL can't be profiled.

Android lunacy

Starting with Android 8.0, you are no longer allowed to use the /proc file system. One of the consequences of this change is the inability to check system CPU usage.

This is apparently a security enhancement. Unfortunately, in its infinite wisdom, Google has decided not to give you an option to bypass this restriction.

To workaround this limitation, you will need to have a rooted device. Execute the following commands using root shell:

setenforce 0

mount -o remount,hidepid=0 /proc

echo -1 > /proc/sys/kernel/perf_event_paranoid

echo 0 > /proc/sys/kernel/kptr_restrict

The first command will allow access to system CPU statistics. The second one will enable inspection of foreign processes (required for context switch capture). The third one will lower restrictions on access to performance counters. The last one will allow retrieval of kernel symbol pointers. Be sure that you are fully aware of the consequences of making these changes.

Virtual machines

The best way to run Tracy is on bare metal. Avoid profiling applications in virtualized environments, including services provided in the cloud. Virtualization interferes with the critical facilities needed for the profiler to work, influencing the results you get. Possible problems may vary, depending on the configuration of the VM, and include:

-

Reduced precision of time stamps.

-

Inability to obtain precise timestamps, resulting in error messages such as CPU doesn't support RDTSC instruction, or CPU doesn't support invariant TSC. On Windows, you can work this around by rebuilding the profiled application with the

TRACY_TIMER_QPCdefine, which severely lowers the resolution of time readings. -

Frequency of call stack sampling may be reduced.

-

Call stack sampling might lack time stamps. While you can use such a reduced data set to perform statistical analysis, you won't be able to limit the time range or see the sampling zones on the timeline.

Docker on Linux

Although the basic features will work without them, you'll have to grant elevated access rights to the container running your client. Here is a sample configuration that may enable the CPU sampling features15.

-

--privileged -

--mount "type=bind,source=/sys/kernel/debug,target=/sys/kernel/debug,readonly" -

--user 0:0 -

--pid=host

Troubleshooting

Setting the TRACY_VERBOSE variable will make the client display advanced information about the detected features. By matching those debug prints to the source code, you might be able to uncover why some of the features are missing on your platform.

Changing network port

By default, the client and server communicate on the network using port 8086. The profiling session utilizes the TCP protocol, and the client sends presence announcement broadcasts over UDP.

Suppose for some reason you want to use another port16. In that case, you can change it using the TRACY_DATA_PORT macro for the data connection and TRACY_BROADCAST_PORT macro for client broadcasts. Alternatively, you may change both ports at the same time by declaring the TRACY_PORT macro (specific macros listed before have higher priority). You may also change the data connection port without recompiling the client application by setting the TRACY_PORT environment variable.

If a custom port is not specified and the default listening port is already occupied, the profiler will automatically try to listen on a number of other ports.

::: bclogo Important To enable network communication, Tracy needs to open a listening port. Make sure it is not blocked by an overzealous firewall or anti-virus program. :::

Limitations

When using Tracy Profiler, keep in mind the following requirements:

-

The application may use each lock in no more than 64 unique threads.

-

There can be no more than 65534 unique source locations17. This number is further split in half between native code source locations and dynamic source locations (for example, when Lua instrumentation is used).

-

If there are recursive zones at any point in a zone stack, each unique zone source location should not appear more than 255 times.

-

Profiling session cannot be longer than 1.6 days (

2^{47}ns). This also includes on-demand sessions. -

No more than 4 billion (

2^{32}) memory free events may be recorded. -

No more than 16 million (

2^{24}) unique call stacks can be captured.

The following conditions also need to apply but don't trouble yourself with them too much. You would probably already know if you'd be breaking any.

-

Only little-endian CPUs are supported.

-

Virtual address space must be limited to 48 bits.

-

Tracy server requires CPU which can handle misaligned memory accesses.

Check your environment

It is not an easy task to reliably measure the performance of an application on modern machines. There are many factors affecting program execution characteristics, some of which you will be able to minimize and others you will have to live with. It is critically important that you understand how these variables impact profiling results, as it is key to understanding the data you get.

Operating system

In a multitasking operating system, applications compete for system resources with each other. This has a visible effect on the measurements performed by the profiler, which you may or may not accept.

To get the most accurate profiling results, you should minimize interference caused by other programs running on the same machine. Before starting a profile session, close all web browsers, music players, instant messengers, and all other non-essential applications like Steam, Uplay, etc. Make sure you don't have the debugger hooked into the profiled program, as it also impacts the timing results.

Interference caused by other programs can be seen in the profiler if context switch capture (section 3.16.3) is enabled.

::: bclogo Debugger in Visual Studio In MSVC, you would typically run your program using the Start Debugging menu option, which is conveniently available as a F5 shortcut. You should instead use the Start Without Debugging option, available as Ctrl + F5 shortcut. :::

CPU design

Where to even begin here? Modern processors are such complex beasts that it's almost impossible to say anything about how they will behave surely. Cache configuration, prefetcher logic, memory timings, branch predictor, execution unit counts are all the drivers of instructions-per-cycle uplift nowadays after the megahertz race had hit the wall. Not only is it challenging to reason about, but you also need to take into account how the CPU topology affects things, which is described in more detail in section 3.16.4.

Nevertheless, let's look at how we can try to stabilize the profiling data.

Superscalar out-of-order speculative execution

Also known as: the spectre thing we have to deal with now.

You must be aware that most processors available on the market18 do not execute machine code linearly, as laid out in the source code. This can lead to counterintuitive timing results reported by Tracy. Trying to get more 'reliable' readings19 would require a change in the behavior of the code, and this is not a thing a profiler should do. So instead, Tracy shows you what the hardware is really doing.

This is a complex subject, and the details vary from one CPU to another. You can read a brief rundown of the topic at the following address: https://travisdowns.github.io/blog/2019/06/11/speed-limits.html.

Simultaneous multithreading

Also known as: Hyper-threading. Typically present on Intel and AMD processors.

To get the most reliable results, you should have all the CPU core resources dedicated to a single thread of your program. Otherwise, you're no longer measuring the behavior of your code but rather how it keeps up when its computing resources are randomly taken away by some other thing running on another pipeline within the same physical core.

Note that you might want to observe this behavior if you plan to deploy your application on a machine with simultaneous multithreading enabled. This would require careful examination of what else is running on the machine, or even how the operating system schedules the threads of your own program, as various combinations of competing workloads (e.g., integer/floating-point operations) will be impacted differently.

Turbo mode frequency scaling

Also known as: Turbo Boost (Intel), Precision Boost (AMD).

While the CPU is more-or-less designed always to be able to work at the advertised base frequency, there is usually some headroom left, which allows usage of the built-in automatic overclocking. There are no guarantees that the CPU can attain the turbo frequencies or how long it will uphold them, as there are many things to take into consideration:

-

How many cores are in use? Just one, or all 8? All 16?

-

What type of work is being performed? Integer? Floating-point? 128-wide SIMD? 256-wide SIMD? 512-wide SIMD?

-

Were you lucky in the silicon lottery? Some dies are just better made and can achieve higher frequencies.

-

Are you running on the best-rated core or at the worst-rated core? Some cores may be unable to match the performance of other cores in the same processor.

-

What kind of cooling solution are you using? The cheap one bundled with the CPU or a hefty chunk of metal that has no problem with heat dissipation?

-

Do you have complete control over the power profile? Spoiler alert: no. The operating system may run anything at any time on any of the other cores, which will impact the turbo frequency you're able to achieve.

As you can see, this feature basically screams 'unreliable results!' Best keep it disabled and run at the base frequency. Otherwise, your timings won't make much sense. A true example: branchless compression function executing multiple times with the same input data was measured executing at four different speeds.

Keep in mind that even at the base frequency, you may hit the thermal limits of the silicon and be down throttled.

Power saving

This is, in essence, the same as turbo mode, but in reverse. While unused, processor cores are kept at lower frequencies (or even wholly disabled) to reduce power usage. When your code starts running20, the core frequency needs to ramp up, which may be visible in the measurements.

Even worse, if your code doesn't do a lot of work (for example, because it is waiting for the GPU to finish rendering the frame), the CPU might not ramp up the core frequency to 100%, which will skew the results.

Again, to get the best results, keep this feature disabled.

AVX offset and power licenses

Intel CPUs are unable to run at their advertised frequencies when they perform wide SIMD operations due to increased power requirements21. Therefore, depending on the width and type of operations executed, the core operating frequency will be reduced, in some cases quite drastically22. To make things even better, some parts of the workload will execute within the available power license, at a twice reduced processing rate. After that, the CPU may be stopped for some time so that the wide parts of executions units can be powered up. Then the work will continue at full processing rate but at a reduced frequency.

Be very careful when using AVX2 or AVX512.

More information can be found at https://travisdowns.github.io/blog/2020/01/17/avxfreq1.html, https://en.wikichip.org/wiki/intel/frequency_behavior.

Summing it up

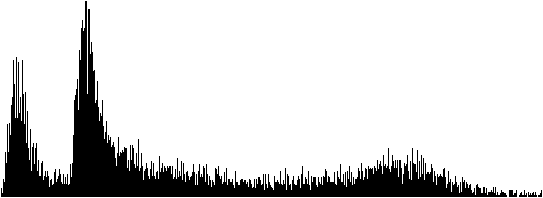

Power management schemes employed in various CPUs make it hard to reason about the true performance of the code. For example, figure 3 contains a histogram of function execution times (as described in chapter 5.7), as measured on an AMD Ryzen CPU. The results ranged from 13.05 μs to 61.25 μs (extreme outliers were not included on the graph, limiting the longest displayed time to 36.04 μs).

We can immediately see that there are two distinct peaks, at 13.4 μs and 15.3 μs. A reasonable assumption would be that there are two paths in the code, one that can omit some work, and the second one which must do some additional job. But here's a catch -- the measured code is actually branchless and always executes the same way. The two peaks represent two turbo frequencies between which the CPU was aggressively switching.

We can also see that the graph gradually falls off to the right (representing longer times), with a slight bump near the end. Again, this can be attributed to running in power-saving mode, with different reaction times to the required operating frequency boost to full power.

Building the server

Tracy uses the CMake build system. Unlike in most other programs, the root-level CMakeLists.txt file is only used to provide client integration. The build definition files used to create profiler executables are stored in directories specific to each utility.

The easiest way to get going is to build the data analyzer, available in the profiler directory. Then, you can connect to localhost or remote clients and view the collected data right away with it.

If you prefer to inspect the data only after a trace has been performed, you may use the command-line utility in the capture directory. It will save a data dump that you may later open in the graphical viewer application.

Ideally, it would be best to use the same version of the Tracy profiler on both client and server. The network protocol may change in-between releases, in which case you won't be able to make a connection.

See section 4 for more information about performing captures.

::: bclogo

How to use CMake The CMakeLists.txt file only contains the general definition of how the program should be built. To be able to actually compile the program, you must first create a build directory that takes into account the specific compiler you have on your system, the set of available libraries, the build options you specify, and so on. You can do this by issuing the following command, in this case for the profiler utility:

cmake -B profiler/build -S profiler -DCMAKE_BUILD_TYPE=Release

Now that you have a build directory, you can actually compile the program. For example, you could run the following command:

cmake --build profiler/build --config Release --parallel

The build directory can be reused if you want to compile the program in the future, for example if there have been some updates to the source code, and usually does not need to be regenerated. Note that all build artifacts are contained in the build directory. :::

::: bclogo

Caveats Tracy requires network connectivity and git to be available during the build configuration step in order to download the necessary libraries. By default, this is done for each build directory you configure with CMake. To make this requirement more reasonable, it is recommended to set a cache directory, for example:

export CPM_SOURCE_CACHE=~/.cache/cpm

With this environment variable set, the library download will be performed only once, and the cached checkouts will be used in all future CMake build directory setups, allowing offline builds. Access to the network will then only be needed if the cache directory is cleared, or if the requirements for the libraries change, for example after an upgrade to a different version of Tracy. :::

::: bclogo Important Due to the memory requirements for data storage, the Tracy server is only supposed to run on 64-bit platforms. While nothing prevents the program from building and executing in a 32-bit environment, doing so is not supported. :::

Required libraries

In most cases it is possible to build Tracy without manually installing additional libraries. All requirements are automatically downloaded by CMake.

Unix

On Unix systems, such as Linux or macOS, it is possible to link with certain common system libraries to reduce build times and resource usage through shared objects. This is optional and will be done automatically if all requirements are met. If it's not possible, there is no loss of functionality as Tracy will build and statically link these libraries anyway.

You will need to install the pkg-config utility to provide information about libraries. Then you will need to install freetype and glfw libraries.

Installation of the libraries on OSX can be facilitated using the brew package manager.

::: bclogo

Linux distributions Some Linux distributions require you to add a lib prefix and a -dev or -devel postfix to library names. You may also need to add a seemingly random number to the library name (for example: freetype2, or freetype6).

Some Linux distributions ship outdated versions of libraries that are too old for Tracy to build, and do not provide new versions by design. Please reconsider your choice of distribution in this case, as the only function of a Linux distribution is to provide packages, and the one you have chosen is clearly failing at this task. :::

Linux

There are some Linux-specific libraries that you need to have installed on your system. These won't be downloaded automatically.

For XDG Portal support in the file selector, you need to install the dbus library (and a portal appropriate for your desktop environment). If you're one of those weird people who doesn't like modern things, you can install gtk3 instead and force the GTK file selector with a build option.

Linux builds of Tracy use the Wayland protocol by default, which allows proper support for Hi-DPI scaling, high-precision input devices such as touchpads, proper handling of mouse cursors, and so on. As such, the glfw library is no longer needed, but you will need to install libxkbcommon, wayland, wayland-protocols, libglvnd (or libegl on some distributions).

If you want to use X11 instead, you can enable the LEGACY option in CMake build settings. Going this way is discouraged.

::: bclogo Window decorations Please don't ask about window decorations in Gnome. The current behavior is the intended behavior. Gnome does not want windows to have decorations, and Tracy respects that choice. If you find this problematic, use a desktop environment that actually listens to its users. :::

Using an IDE

The recommended development environment is Visual Studio Code23. This is a cross-platform solution, so you always get the same experience, no matter what OS you are using.

VS Code is highly modular, and unlike some other IDEs, it does not come with a compiler. You will need to have one, such as gcc or clang, already installed on your system. On Windows, you should have MSVC 2022 installed in order to have access to its build tools.

When you open the Tracy directory in VS Code, it will prompt you to install some recommended extensions: clangd, CodeLLDB, and CMake Tools. You should do this if you don't already have them.

The CMake build configuration will begin immediately. It is likely that you will be prompted to select a development kit to use; for example, you may have a preference as to whether you want to use gcc or clang, and CMake will need to be told about it.

After the build configuration phase is over, you may want to make some further adjustments to what is being built. The primary place to do this is in the Project Status section of the CMake side panel. The two key settings there are also available in the status bar at the bottom of the window:

-

The Folder setting allows you to choose which Tracy utility you want to work with. Select "profiler" for the profiler's GUI.

-

The Build variant setting is used to toggle between the debug and release build configurations.

With all this taken care of, you can now start the program with the F5 key, set breakpoints, get code completion and navigation24, and so on.

Embedding the server in profiled application

While not officially supported, it is possible to embed the server in your application, the same one running the client part of Tracy. How to make this work is left up for you to figure out.

Note that most libraries bundled with Tracy are modified in some way and contained in the tracy namespace. The one exception is Dear ImGui, which can be freely replaced.

Be aware that while the Tracy client uses its own separate memory allocator, the server part of Tracy will use global memory allocation facilities shared with the rest of your application. This will affect both the memory usage statistics and Tracy memory profiling.

The following defines may be of interest:

-

TRACY_NO_FILESELECTOR-- controls whether a system load/save dialog is compiled in. If it's enabled, the saved traces will be namedtrace.tracy. -

TRACY_NO_STATISTICS-- Tracy will perform statistical data collection on the fly, if this macro is not defined. This allows extended trace analysis (for example, you can perform a live search for matching zones) at a small CPU processing cost and a considerable memory usage increase (at least 8 bytes per zone). -

TRACY_NO_ROOT_WINDOW-- the main profiler view won't occupy the whole window if this macro is defined. Additional setup is required for this to work. If you want to embed the server into your application, you probably should enable this option.

DPI scaling

The graphic server application will adapt to the system DPI scaling. If for some reason, this doesn't work in your case, you may try setting the TRACY_DPI_SCALE environment variable to a scale fraction, where a value of 1 indicates no scaling.

Naming threads

Remember to set thread names for proper identification of threads. You should do so by using the function tracy::SetThreadName(name) exposed in the public/common/TracySystem.hpp header, as the system facilities typically have limited functionality.

It is also possible to specify a thread group hint with tracy::SetThreadNameWithHint(name, int32_t groupHint). This hint is an arbitrary number that is used to group threads together in the profiler UI. The default value and the value for the main thread is zero.

Tracy will try to capture thread names through operating system data if context switch capture is active. However, this is only a fallback mechanism, and it shouldn't be relied upon.

Source location data customization

Some source location data such as function name, file path or line number can be overriden with defines TracyFunction, TracyFile, TracyLine25 made before including public/tracy/Tracy.hpp header file26.

Crash handling

On selected platforms (see section 2.6) Tracy will intercept application crashes27. This serves two purposes. First, the client application will be able to send the remaining profiling data to the server. Second, the server will receive a crash report with the crash reason, call stack at the time of the crash, etc.

This is an automatic process, and it doesn't require user interaction. If you are experiencing issues with crash handling you may want to try defining the TRACY_NO_CRASH_HANDLER macro to disable the built in crash handling.

::: bclogo Caveats

-

On MSVC the debugger has priority over the application in handling exceptions. If you want to finish the profiler data collection with the debugger hooked-up, select the continue option in the debugger pop-up dialog.

-

On Linux, crashes are handled with signals. Tracy needs to have

SIGPWRavailable, which is rather rarely used. Still, the program you are profiling may expect to employ it for its purposes, which would cause a conflict28. To workaround such cases, you may set theTRACY_CRASH_SIGNALmacro value to some other signal (seeman 7 signalfor a list of signals). Ensure that you avoid conflicts by selecting a signal that the application wouldn't usually receive or emit. :::

Feature support matrix

Some features of the profiler are only available on selected platforms. Please refer to table 2 for details.

::: {#featuretable} Feature Windows Linux Android OSX iOS BSD QNX

Profiling program init (Check icon) (Check icon) (Check icon) (Poo icon) (Poo icon) (Check icon) (Check icon)

CPU zones (Check icon) (Check icon) (Check icon) (Check icon) (Check icon) (Check icon) (Check icon)

Locks (Check icon) (Check icon) (Check icon) (Check icon) (Check icon) (Check icon) (Check icon)

Plots (Check icon) (Check icon) (Check icon) (Check icon) (Check icon) (Check icon) (Check icon)

Messages (Check icon) (Check icon) (Check icon) (Check icon) (Check icon) (Check icon) (Check icon)

Memory (Check icon) (Check icon) (Check icon) (Check icon) (Check icon) (Check icon) (Times icon)

GPU zones (OpenGL) (Check icon) (Check icon) (Check icon) (Poo icon) (Poo icon) (Times icon)

GPU zones (Vulkan) (Check icon) (Check icon) (Check icon) (Check icon) (Check icon) (Times icon)

GPU zones (Metal) (Times icon) (Times icon) (Times icon) (Check icon)^*b*^ (Check icon)^*b*^ (Times icon) (Times icon)

Call stacks (Check icon) (Check icon) (Check icon) (Check icon) (Check icon) (Check icon) (Times icon)

Symbol resolution (Check icon) (Check icon) (Check icon) (Check icon) (Check icon) (Check icon) (Check icon)

Crash handling (Check icon) (Check icon) (Check icon) (Times icon) (Times icon) (Times icon) (Times icon)

CPU usage probing (Check icon) (Check icon) (Check icon) (Check icon) (Check icon) (Check icon) (Times icon)

Context switches (Check icon) (Check icon) (Check icon) (Times icon) (Poo icon) (Times icon) (Times icon)

Wait stacks (Check icon) (Check icon) (Check icon) (Times icon) (Poo icon) (Times icon) (Times icon)

CPU topology information (Check icon) (Check icon) (Check icon) (Times icon) (Times icon) (Times icon) (Times icon) Call stack sampling (Check icon) (Check icon) (Check icon) (Times icon) (Poo icon) (Times icon) (Times icon) Hardware sampling (Check icon)^a^ (Check icon) (Check icon) (Times icon) (Poo icon) (Times icon) (Times icon) VSync capture (Check icon) (Check icon) (Times icon) (Times icon) (Times icon) (Times icon) (Times icon)

: Feature support matrix :::

(Poo icon) -- Not possible to support due to platform limitations.

^a^Possible through WSL2. ^b^Only tested on Apple Silicon M1 series

Client markup

With the steps mentioned above, you will be able to connect to the profiled program, but there probably won't be any data collection performed29. Unless you're able to perform automatic call stack sampling (see chapter 3.16.5), you will have to instrument the application manually. All the user-facing interface is contained in the public/tracy/Tracy.hpp header file30.

Manual instrumentation is best started with adding markup to the application's main loop, along with a few functions that the loop calls. Such an approach will give you a rough outline of the function's time cost, which you may then further refine by instrumenting functions deeper in the call stack. Alternatively, automated sampling might guide you more quickly to places of interest.

Handling text strings

When dealing with Tracy macros, you will encounter two ways of providing string data to the profiler. In both cases, you should pass const char* pointers, but there are differences in the expected lifetime of the pointed data.

-

When a macro only accepts a pointer (for example:

TracyMessageL(text)), the provided string data must be accessible at any time in program execution (this also includes the time after exiting themainfunction). The string also cannot be changed. This basically means that the only option is to use a string literal (e.g.:TracyMessageL("Hello")). -

If there's a string pointer with a size parameter (for example

TracyMessage(text, size)), the profiler will allocate a temporary internal buffer to store the data. Thesizecount should not include the terminating null character, usingstrlen(text)is fine. The pointed-to data is not used afterward. Remember that allocating and copying memory involved in this operation has a small time cost.

Be aware that every single instance of text string data passed to the profiler can't be larger than 64 KB.

Program data lifetime

Take extra care to consider the lifetime of program code (which includes string literals) in your application. For example, if you dynamically add and remove modules (i.e., DLLs, shared objects) during the runtime, text data will only be present when the module is loaded. Additionally, when a module is unloaded, the operating system can place another one in its space in the process memory map, resulting in the aliasing of text strings. This leads to all sorts of confusion and potential crashes.

Note that string literals are the only option in many parts of the Tracy API. For example, look at how frame or plot names are specified. You cannot unload modules that contain string literals that you passed to the profiler31.

Unique pointers

In some cases marked in the manual, Tracy expects you to provide a unique pointer in each occurrence the same string literal is used. This can be exemplified in the following listing:

FrameMarkStart("Audio processing");

...

FrameMarkEnd("Audio processing");

Here, we pass two string literals with identical contents to two different macros. It is entirely up to the compiler to decide if it will pool these two strings into one pointer or if there will be two instances present in the executable image32. For example, on MSVC, this is controlled by Configuration Properties,C/C++,Code Generation,Enable String Pooling option in the project properties (optimized builds enable it automatically). Note that even if string pooling is used on the compilation unit level, it is still up to the linker to implement pooling across object files.

As you can see, making sure that string literals are properly pooled can be surprisingly tricky. To work around this problem, you may employ the following technique. In one source file create the unique pointer for a string literal, for example:

const char* const sl_AudioProcessing = "Audio processing";

Then in each file where you want to use the literal, use the variable name instead. Notice that if you'd like to change a name passed to Tracy, you'd need to do it only in one place with such an approach.

extern const char* const sl_AudioProcessing;

FrameMarkStart(sl_AudioProcessing);

...

FrameMarkEnd(sl_AudioProcessing);

In some cases, you may want to have semi-dynamic strings. For example, you may want to enumerate workers but don't know how many will be used. You can handle this by allocating a never-freed char buffer, which you can then propagate where it's needed. For example:

char* workerId = new char[16];

snprintf(workerId, 16, "Worker %i", id);

...

FrameMarkStart(workerId);

You have to make sure it's initialized only once, before passing it to any Tracy API, that it is not overwritten by new data, etc. In the end, this is just a pointer to character-string data. It doesn't matter if the memory was loaded from the program image or allocated on the heap.

Specifying colors

In some cases, you will want to provide your own colors to be displayed by the profiler. You should use a hexadecimal 0xRRGGBB notation in all such places.

Alternatively you may use named colors predefined in common/TracyColor.hpp (included by Tracy.hpp). Visual reference: https://en.wikipedia.org/wiki/X11_color_names.

Do not use 0x000000 if you want to specify black color, as zero is a special value indicating that no color was set. Instead, use a value close to zero, e.g. 0x000001.

Marking frames

To slice the program's execution recording into frame-sized chunks33, put the FrameMark macro after you have completed rendering the frame. Ideally, that would be right after the swap buffers command.

::: bclogo Do I need this? This step is optional, as some applications do not use the concept of a frame. :::

Secondary frame sets

In some cases, you may want to track more than one set of frames in your program. To do so, you may use the FrameMarkNamed(name) macro, which will create a new set of frames for each unique name you provide. But, first, make sure you are correctly pooling the passed string literal, as described in section 3.1.2.

Discontinuous frames

Some types of frames are discontinuous by their nature -- they are executed periodically, with a pause between each run. Examples of such frames are a physics processing step in a game loop or an audio callback running on a separate thread. Tracy can also track this kind of frames.

To mark the beginning of a discontinuous frame use the FrameMarkStart(name) macro. After the work is finished, use the FrameMarkEnd(name) macro.

::: bclogo Important

-

Frame types must not be mixed. For each frame set, identified by an unique name, use either continuous or discontinuous frames only!

-

You must issue the

FrameMarkStartandFrameMarkEndmacros in proper order. Be extra careful, especially if multi-threading is involved. -

String literals passed as frame names must be properly pooled, as described in section 3.1.2. :::

Frame images

It is possible to attach a screen capture of your application to any frame in the main frame set. This can help you see the context of what's happening in various places in the trace. You need to implement retrieval of the image data from GPU by yourself.

Images are sent using the FrameImage(image, width, height, offset, flip) macro, where image is a pointer to RGBA34 pixel data, width and height are the image dimensions, which must be divisible by 4, offset specifies how much frame lag was there for the current image (see chapter 3.3.3.1), and flip should be set, if the graphics API stores images upside-down35. The profiler copies the image data, so you don't need to retain it.

Handling image data requires a lot of memory and bandwidth36. To achieve sane memory usage, you should scale down taken screenshots to a suitable size, e.g., 320\times180.

To further reduce image data size, frame images are internally compressed using the DXT1 Texture Compression technique37, which significantly reduces data size38, at a slight quality decrease. The compression algorithm is high-speed and can be made even faster by enabling SIMD processing, as indicated in table 3.

::: {#EtcSimd} Implementation Required define Time

x86 Reference --- 198.2 μs

x86 SSE4.1^a^ `__SSE4_1__` 25.4 μs

x86 AVX2 `__AVX2__` 17.4 μs

ARM Reference --- 1.04 ms

ARM32 NEON^b^ `__ARM_NEON` 529 μs

ARM64 NEON `__ARM_NEON` 438 μs

: Client compression time of 320\times180 image. x86: Ryzen 9 3900X (MSVC); ARM: ODROID-C2 (gcc).

:::

^a)^ VEX encoding; ^b)^ ARM32 NEON code compiled for ARM64

::: bclogo Caveats

-

Frame images are compressed on a second client profiler thread39, to reduce memory usage of queued images. This might have an impact on the performance of the profiled application.

-

This second thread will be periodically woken up, even if there are no frame images to compress40. If you are not using the frame image capture functionality and you don't wish this thread to be running, you can define the

TRACY_NO_FRAME_IMAGEmacro. -

Due to implementation details of the network buffer, a single frame image cannot be greater than 256 KB after compression. Note that a

960\times540image fits in this limit. :::

OpenGL screen capture code example

There are many pitfalls associated with efficiently retrieving screen content. For example, using glReadPixels and then resizing the image using some library is terrible for performance, as it forces synchronization of the GPU to CPU and performs the downscaling in software. To do things properly, we need to scale the image using the graphics hardware and transfer data asynchronously, which allows the GPU to run independently of the CPU.

The following example shows how this can be achieved using OpenGL 3.2. Of course, more recent OpenGL versions allow doing things even better (for example, using persistent buffer mapping), but this manual won't cover it here.

Let's begin by defining the required objects. First, we need a texture to store the resized image, a framebuffer object to be able to write to the texture, a pixel buffer object to store the image data for access by the CPU, and a fence to know when the data is ready for retrieval. We need everything in at least three copies (we'll use four) because the rendering, as seen in the program, can run ahead of the GPU by a couple of frames. Next, we need an index to access the appropriate data set in a ring-buffer manner. And finally, we need a queue to store indices to data sets that we are still waiting for.

GLuint m_fiTexture[4];

GLuint m_fiFramebuffer[4];

GLuint m_fiPbo[4];

GLsync m_fiFence[4];

int m_fiIdx = 0;

std::vector<int> m_fiQueue;

Everything needs to be correctly initialized (the cleanup is left for the reader to figure out).

glGenTextures(4, m_fiTexture);

glGenFramebuffers(4, m_fiFramebuffer);

glGenBuffers(4, m_fiPbo);

for(int i=0; i<4; i++)

{

glBindTexture(GL_TEXTURE_2D, m_fiTexture[i]);

glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_MIN_FILTER, GL_NEAREST);

glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_MAG_FILTER, GL_NEAREST);

glTexImage2D(GL_TEXTURE_2D, 0, GL_RGBA, 320, 180, 0, GL_RGBA, GL_UNSIGNED_BYTE, nullptr);

glBindFramebuffer(GL_FRAMEBUFFER, m_fiFramebuffer[i]);

glFramebufferTexture2D(GL_FRAMEBUFFER, GL_COLOR_ATTACHMENT0, GL_TEXTURE_2D,

m_fiTexture[i], 0);

glBindBuffer(GL_PIXEL_PACK_BUFFER, m_fiPbo[i]);

glBufferData(GL_PIXEL_PACK_BUFFER, 320*180*4, nullptr, GL_STREAM_READ);

}

We will now set up a screen capture, which will downscale the screen contents to 320\times180 pixels and copy the resulting image to a buffer accessible by the CPU when the operation is done. This should be placed right before swap buffers or present call.

assert(m_fiQueue.empty() || m_fiQueue.front() != m_fiIdx); // check for buffer overrun

glBindFramebuffer(GL_DRAW_FRAMEBUFFER, m_fiFramebuffer[m_fiIdx]);

glBlitFramebuffer(0, 0, res.x, res.y, 0, 0, 320, 180, GL_COLOR_BUFFER_BIT, GL_LINEAR);

glBindFramebuffer(GL_DRAW_FRAMEBUFFER, 0);

glBindFramebuffer(GL_READ_FRAMEBUFFER, m_fiFramebuffer[m_fiIdx]);

glBindBuffer(GL_PIXEL_PACK_BUFFER, m_fiPbo[m_fiIdx]);

glReadPixels(0, 0, 320, 180, GL_RGBA, GL_UNSIGNED_BYTE, nullptr);

glBindFramebuffer(GL_READ_FRAMEBUFFER, 0);

m_fiFence[m_fiIdx] = glFenceSync(GL_SYNC_GPU_COMMANDS_COMPLETE, 0);

m_fiQueue.emplace_back(m_fiIdx);

m_fiIdx = (m_fiIdx + 1) % 4;

And lastly, just before the capture setup code that was just added41 we need to have the image retrieval code. We are checking if the capture operation has finished. If it has, we map the pixel buffer object to memory, inform the profiler that there are image data to be handled, unmap the buffer and go to check the next queue item. If capture is still pending, we break out of the loop. We will have to wait until the next frame to check if the GPU has finished performing the capture.

Notice that in the call to FrameImage we are passing the remaining queue size as the offset parameter. Queue size represents how many frames ahead our program is relative to the GPU. Since we are sending past frame images, we need to specify how many frames behind the images are. Of course, if this would be synchronous capture (without the use of fences and with retrieval code after the capture setup), we would set offset to zero, as there would be no frame lag.

High quality capture

The code above uses glBlitFramebuffer function, which can only use nearest neighbor filtering. The use of such filtering can result in low-quality screenshots, as shown in figure [lowqualityss]. However, with a bit more work, it is possible to obtain nicer-looking screenshots, as presented in figure 4. Unfortunately, you will need to set up a complete rendering pipeline for this to work.

First, you need to allocate an additional set of intermediate frame buffers and textures, sized the same as the screen. These new textures should have a minification filter set to GL_LINEAR_MIPMAP_LINEAR. You will also need to set up everything needed to render a full-screen quad: a simple texturing shader and vertex buffer with appropriate data. Since you will use this vertex buffer to render to the scaled-down frame buffer, you may prepare its contents beforehand and update it only when the aspect ratio changes.

With all this done, you can perform the screen capture as follows:

-

Setup vertex buffer configuration for the full-screen quad buffer (you only need position and uv coordinates).

-

Blit the screen contents to the full-sized frame buffer.

-

Bind the texture backing the full-sized frame buffer.

-

Generate mipmaps using

glGenerateMipmap. -

Set viewport to represent the scaled-down image size.

-

Bind vertex buffer data, shader, setup the required uniforms.

-

Draw full-screen quad to the scaled-down frame buffer.

-

Retrieve frame buffer contents, as in the code above.

-

Restore viewport, vertex buffer configuration, bound textures, etc.

While this approach is much more complex than the previously discussed one, the resulting image quality increase makes it worthwhile.

You can see the performance results you may expect in a simple application in table 4. The naïve capture performs synchronous retrieval of full-screen image and resizes it using stb_image_resize. The proper and high-quality captures do things as described in this chapter.

::: {#asynccapture} Resolution Naïve capture Proper capture High quality

1280\times720 80 FPS 4200 FPS 2800 FPS

2560\times1440 23 FPS 3300 FPS 1600 FPS

: Frame capture efficiency :::

Marking zones

To record a zone's42 execution time add the ZoneScoped macro at the beginning of the scope you want to measure. This will automatically record function name, source file name, and location. Optionally you may use the ZoneScopedC(color) macro to set a custom color for the zone. Note that the color value will be constant in the recording (don't try to parametrize it). You may also set a custom name for the zone, using the ZoneScopedN(name) macro. Color and name may be combined by using the ZoneScopedNC(name, color) macro.

Use the ZoneText(text, size) macro to add a custom text string that the profiler will display along with the zone information (for example, name of the file you are opening). Multiple text strings can be attached to any single zone. The dynamic color of a zone can be specified with the ZoneColor(uint32_t) macro to override the source location color. If you want to send a numeric value and don't want to pay the cost of converting it to a string, you may use the ZoneValue(uint64_t) macro. Finally, you can check if the current zone is active with the ZoneIsActive macro.

If you want to set zone name on a per-call basis, you may do so using the ZoneName(text, size) macro. However, this name won't be used in the process of grouping the zones for statistical purposes (sections 5.6 and 5.7).

To use printf-like formatting, you can use the ZoneTextF(fmt, ...) and ZoneNameF(fmt, ...) macros.

::: bclogo Important Zones are identified using static data structures embedded in program code. Therefore, you need to consider the lifetime of code in your application, as discussed in section 3.1.1, to make sure that the profiler can access this data at any time during the program lifetime.

If you can't fulfill this requirement, you must use transient zones, described in section 3.4.4. :::

Manual management of zone scope

The zone markup macros automatically report when they end, through the RAII mechanism43. This is very helpful, but sometimes you may want to mark the zone start and end points yourself, for example, if you want to have a zone that crosses the function's boundary. You can achieve this by using the C API, which is described in section 3.13.

Multiple zones in one scope

Using the ZoneScoped family of macros creates a stack variable named ___tracy_scoped_zone. If you want to measure more than one zone in the same scope, you will need to use the ZoneNamed macros, which require that you provide a name for the created variable. For example, instead of ZoneScopedN("Zone name"), you would use ZoneNamedN(variableName, "Zone name", true)44.

The ZoneText, ZoneColor, ZoneValue, ZoneIsActive, and ZoneName macros apply to the zones created using the ZoneScoped macros. For zones created using the ZoneNamed macros, you can use the ZoneTextV(variableName, text, size), ZoneColorV(variableName, uint32_t), ZoneValueV(variableName, uint64_t), ZoneIsActiveV(variableName), or ZoneNameV(variableName, text, size) macros, or invoke the methods Text, Color, Value, IsActive, or Name directly on the variable you have created.

Zone objects can't be moved or copied.

::: bclogo

Zone stack The ZoneScoped macros are imposing the creation and usage of an implicit zone stack. You must also follow the rules of this stack when using the named macros, which give you some more leeway in doing things. For example, you can only set the text for the zone which is on top of the stack, as you only could do with the ZoneText macro. It doesn't matter that you can call the Text method of a non-top zone which is accessible through a variable. Take a look at the following code:

{

ZoneNamed(Zone1, true);

@\circled{a}@

{

ZoneNamed(Zone2, true);

@\circled{b}@

}

@\circled{c}@

}

It is valid to set the Zone1 text or name only in places or . After Zone2 is created at you can no longer perform operations on Zone1, until Zone2 is destroyed.

:::

Filtering zones

Zone logging can be disabled on a per-zone basis by making use of the ZoneNamed macros. Each of the macros takes an active argument ('true' in the example in section 3.4.2), which will determine whether the zone should be logged.

Note that this parameter may be a run-time variable, such as a user-controlled switch to enable profiling of a specific part of code only when required.

If the condition is constant at compile-time, the resulting code will not contain a branch (the profiling code will either be always enabled or won't be there at all). The following listing presents how you might implement profiling of specific application subsystems:

enum SubSystems

{

Sys_Physics = 1 << 0,

Sys_Rendering = 1 << 1,

Sys_NasalDemons = 1 << 2

}

...

// Preferably a define in the build system

#define SUBSYSTEMS (Sys_Physics | Sys_NasalDemons)

...

void Physics::Process()

{

ZoneNamed( __tracy, SUBSYSTEMS & Sys_Physics ); // always true, no runtime cost

...

}

void Graphics::Render()

{

ZoneNamed( __tracy, SUBSYSTEMS & Sys_Graphics ); // always false, no runtime cost

...

}

Transient zones

In order to prevent problems caused by unloadable code, described in section 3.1.1, transient zones copy the source location data to an on-heap buffer. This way, the requirement on the string literal data being accessible for the rest of the program lifetime is relaxed, at the cost of increased memory usage.

Transient zones can be declared through the ZoneTransient and ZoneTransientN macros, with the same set of parameters as the ZoneNamed macros. See section 3.4.2 for details and make sure that you observe the requirements outlined there.

Variable shadowing

The following code is fully compliant with the C++ standard:

void Function()

{

ZoneScoped;

...

for(int i=0; i<10; i++)

{

ZoneScoped;

...

}

}